How to find duplicated words in a text file


You can find and remove duplicated words using one-line commands.

If you want to find duplicated files instead, see the article “Finding duplicated files: several command-line methods”.

Duplicated words on the same line

Let’s assume we have this text file:

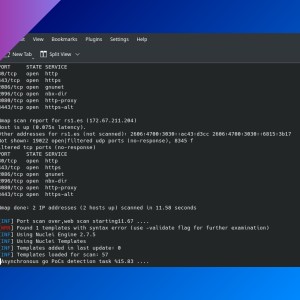

$ cat dupl.txt
Hi, how are are you?
I'm fine, thanks.
I'm working on on a new project.

We have some duplicated adjacent words, like ‘are’ and ‘on’. You can use GNU awk (gawk) to find these words and delete them:

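awk -v RS='[[:space:]]' '$1 != D {printf "%s", $1 RT; D=$1}' < dupl.txt > new.txt

-v RS='[[:space:]]': set the variable RS (the input record separator, a newline by default) to a regular expression that matches any single whitespace character, so that each word becomes a separate record.

$1 != D: means “if the word is not equal to D”, a variable that is assigned at the end of the action.

{printf "%s", $1 RT;: print the word followed by the variable RT (the record terminator: gawk sets RT to the input text that matched the character or regular expression specified by RS, in this case the whitespace character that followed the word).

D=$1}: store the word in D, so that in the next iteration the command can check whether the word repeats.

Note that a skipped word is dropped together with its separator. If the repeated word is the last one on its line, the newline is dropped too, and that line is joined to the next one.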
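The gawk command above relies on RT, a gawk extension. If only a POSIX awk is available, a line-oriented sketch like the following can both report and remove adjacent repeated words. This is an alternative technique, not the author's command, and it rests on several assumptions:

- The function names find_dup_words and remove_dup_words are hypothetical.
- A word is a whitespace-separated field, so ‘on’ and ‘on,’ differ.
- Repeats that span a line break are not detected.
- remove_dup_words rewrites each line with single spaces between words.

```shell
#!/bin/sh
# Portable sketch: POSIX awk only, no gawk extensions such as RT.
# Function names are hypothetical. A "word" is a whitespace-separated
# field, so punctuation is part of the word and repeats across line
# breaks are not seen.

# find_dup_words: print "LINE: WORD" for every word that equals the
# word right before it on the same line.
find_dup_words() {
    awk '{
        for (i = 2; i <= NF; i++)
            if ($i == $(i - 1))
                print NR ": " $i
    }' "$@"
}

# remove_dup_words: rebuild each line, skipping a word when it equals
# the previous one. Fields are rejoined with single spaces, so runs of
# whitespace and leading indentation are not preserved. print appends
# ORS, so the last line always ends with a newline.
remove_dup_words() {
    awk '{
        out = $1
        for (i = 2; i <= NF; i++)
            if ($i != $(i - 1))
                out = out " " $i
        print out
    }' "$@"
}
```

For example, find_dup_words dupl.txt reports the repeated ‘are’ and ‘on’ with their line numbers, and remove_dup_words dupl.txt > new.txt writes the cleaned text. Because every line is printed with print, the output ends with a newline even when the input does not.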

The gawk command above assumes that the file ends with a newline character. If it does not, RT is empty for the last word, so the output also lacks a final newline. You can modify the command to add the newline character manually:

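awk -v RS='[[:space:]]' '$1 != D {printf "%s", $1 (RT?RT:ORS); D=$1}' < dupl.txt > new.txt

(RT?RT:ORS) prints RT when it is not empty, and ORS (the output record separator, a newline by default) otherwise.

Duplicated words on one-word-per-line files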

We have a wordlist file like this one:

$ cat wordlist.txt
one
two
three
two
four

We want to remove repeated words, in this case ‘two’. If you don’t mind losing the original line order, you can run sort with the -u (unique) option:

sort -u wordlist.txt > newwordlist.txt

If repeated words are always on adjacent lines, you can run uniq, which only removes consecutive duplicate lines:

uniq wordlist.txt > newwordlist.txt

In order to keep the line order, we can use cat -n to prefix each line with its number, then sort by word and remove the duplicates, and finally restore the original order:

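cat -n wordlist.txt | sort -k2 | uniq -f1 | sort -n | cut -f2

Here sort -k2 sorts by the word (the second field), and uniq -f1 ignores the first field (the line number) when comparing lines. Then sort -n puts the remaining lines back in numeric line order, and cut -f2 removes the numbers, relying on the tab that cat -n inserts after each number.

If you have any suggestions, feel free to contact me via social media or email.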
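For comparison with the wordlist commands above, here is a minimal sketch of the same tasks using two other common idioms. It assumes one word per line, and the function names are hypothetical.

- dedupe_keep_order uses the awk pattern !seen[$0]++. This keeps the first occurrence of each line in its original position in a single pass, so it can stand in for the cat -n pipeline.
- list_repeated finds rather than removes duplicates. It uses uniq -d, which prints one copy of each repeated line.

```shell
#!/bin/sh
# Sketch for one-word-per-line files; function names are hypothetical.

# dedupe_keep_order: print each line only the first time it appears.
# seen[$0]++ evaluates to 0 on the first occurrence (then increments),
# so !seen[$0]++ is true exactly once per distinct line.
dedupe_keep_order() {
    awk '!seen[$0]++' "$@"
}

# list_repeated: print one copy of every line that occurs more than once.
# uniq -d only compares adjacent lines, so the input is sorted first.
list_repeated() {
    sort "$@" | uniq -d
}
```

With the wordlist.txt example, dedupe_keep_order wordlist.txt > newwordlist.txt should keep one, two, three and four in that order, and list_repeated wordlist.txt should print two. The awk idiom keeps every distinct line in an in-memory array, which only matters for very large files.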
